How to Turn Reddit Discussions into Valuable Datasets

Reddit hosts millions of conversations across thousands of communities, making it one of the richest sources of user-generated content on the internet. With the right approach, these discussions can be transformed into high-quality, structured datasets for research, analytics, and machine learning. This article explains how Reddit content can be modeled as data, how to prepare it for analysis, and how tools like RedScraper can streamline the extraction process.

Why Reddit Is a Powerful Data Source

Reddit is organized into topic-focused communities, or subreddits, where people share posts, comment, upvote, and discuss. This structure provides several advantages for data work:

- Topical segmentation: Subreddits allow you to focus on specific domains (e.g., finance, health, programming, hobbies).

- Rich text content: Posts and comments often contain long-form opinions, explanations, stories, and arguments.

- Engagement signals: Upvotes, downvotes, awards, and replies provide behavioral data related to quality, interest, or controversy.

- Temporal patterns: Timestamps capture how discussions evolve over time, enabling time-series and trend analysis.

Core Elements of a Reddit Dataset

To turn Reddit discussions into a useful dataset, you first need to understand the main building blocks of the platform and how they translate into structured data.

Posts (Submissions)

Posts are the starting point of most Reddit conversations. In a dataset, each post commonly becomes one row in a table with columns such as:

- post_id: Unique identifier for the post.

- subreddit: Community where the post is published.

- author: Username or anonymized user ID.

- title: Short text summarizing the post.

- body: Full text of the submission (if any).

- url / media_url: Link to external content (images, videos, articles).

- created_utc: Timestamp of creation in UTC.

- score: Net upvotes (upvotes minus downvotes). Reddit fuzzes exact vote counts, so treat this value as approximate.

- num_comments: Number of comments.

- flair: Any tags or labels applied to the post.

- is_self / is_media: Indicators of content type (text-only, image, link, etc.).
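As a minimal sketch of the posts table, the Python below flattens one raw submission record into a row with the columns listed above. It assumes the raw record uses the field names of Reddit's public JSON (`id`, `selftext`, `link_flair_text`, `post_hint`, `is_video`). Your extractor may name things differently, and the `is_media` rule is only an illustrative heuristic.

```python
from dataclasses import dataclass
from typing import Any, Optional


@dataclass
class PostRow:
    post_id: str
    subreddit: str
    author: str
    title: str
    body: str
    url: Optional[str]
    created_utc: float
    score: int
    num_comments: int
    flair: Optional[str]
    is_self: bool
    is_media: bool


def to_post_row(raw: dict[str, Any]) -> PostRow:
    """Flatten one raw submission record into a posts-table row.

    Assumes keys named like Reddit's public JSON; adjust to your source.
    """
    is_self = bool(raw.get("is_self", False))
    return PostRow(
        post_id=raw["id"],
        subreddit=raw["subreddit"],
        # Deleted accounts may be missing or null; use a fixed placeholder.
        author=raw.get("author") or "[deleted]",
        title=raw.get("title", ""),
        body=raw.get("selftext", "") if is_self else "",
        url=None if is_self else raw.get("url"),
        created_utc=float(raw["created_utc"]),
        score=int(raw.get("score", 0)),
        num_comments=int(raw.get("num_comments", 0)),
        flair=raw.get("link_flair_text"),
        is_self=is_self,
        # Heuristic (assumption): native videos or image-hinted links are media.
        is_media=bool(raw.get("is_video")) or raw.get("post_hint") == "image",
    )
```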

Comments

Comments form the conversation tree beneath each post. A comments dataset usually includes:

- comment_id: Unique identifier for the comment.

- parent_id: ID of the parent item (post or comment).

- post_id: ID of the root post. This allows you to tie comments back to the original submission.

- author: Username or anonymized user ID.

- body: Text of the comment.

- created_utc: Timestamp of creation.

- score: Net upvotes.

- depth: Level of nesting within the thread.

By storing the parent-child relationships between comments, you can reconstruct discussion trees, measure thread depth, and analyze reply patterns.
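The following sketch shows one way to rebuild those trees from a flat comments table. It assumes `parent_id` values use Reddit's fullname prefixes (`t3_` when the parent is the post, `t1_` when it is another comment); the function name `build_comment_tree` is hypothetical.

```python
from collections import defaultdict


def build_comment_tree(comments):
    """Index replies by parent and compute each comment's nesting depth.

    `comments` is a list of dicts with 'comment_id' and 'parent_id'.
    Assumes Reddit-style fullnames: 't3_<id>' means the parent is the post,
    't1_<id>' means the parent is another comment.
    """
    children = defaultdict(list)  # parent comment id (None = the post) -> reply ids
    for c in comments:
        kind, _, parent = c["parent_id"].partition("_")
        children[parent if kind == "t1" else None].append(c["comment_id"])

    depth = {}
    # Iterative depth-first walk starting from top-level comments (depth 0).
    # Comments whose parent is missing from the data never receive a depth.
    stack = [(cid, 0) for cid in children.get(None, [])]
    while stack:
        cid, d = stack.pop()
        depth[cid] = d
        stack.extend((reply, d + 1) for reply in children.get(cid, []))
    return dict(children), depth
```

Computing depth this way also works as a cross-check when your source already supplies a depth field: deleted or unfetched parents show up as comments with no computed depth.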

User-Level Data

User data is more sensitive, so it should be handled with strict privacy and ethical standards. In many cases, you do not need identifiable information; instead, you can work with pseudonymous or hashed user IDs. Typical user-level features include:

- user_id (or hashed username): Consistent identifier across posts and comments in the dataset.

- karma metrics: Aggregated scores such as total post karma or comment karma (if available through your source).

- activity counts: Number of posts, comments, or interactions per subreddit or per time period.

- participation diversity: Number and types of subreddits a user participates in.

These features are useful for studying user behavior, community engagement, or for building features in recommendation and classification models.
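A minimal sketch of pseudonymous user IDs and two of the features above, in Python. It uses a keyed HMAC rather than a plain hash, because public usernames can be re-identified by hashing a list of candidate names. The `kind` field on each row and both function names are assumptions for illustration.

```python
import hashlib
import hmac
from collections import defaultdict


def pseudonymize(username: str, secret_key: bytes) -> str:
    """Map a username to a stable pseudonymous ID with keyed HMAC-SHA256.

    Keep `secret_key` out of any published dataset; without it the mapping
    cannot be recomputed from candidate usernames.
    """
    digest = hmac.new(secret_key, username.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]  # 64 bits: collisions are negligible at Reddit scale


def user_features(rows, secret_key: bytes):
    """Aggregate per-user activity counts and participation diversity.

    Each row is a dict with 'author', 'subreddit', and 'kind' ('post' or 'comment').
    """
    feats = defaultdict(lambda: {"posts": 0, "comments": 0, "subreddits": set()})
    for r in rows:
        if r["author"] in (None, "[deleted]"):
            continue  # no stable identity to aggregate on
        f = feats[pseudonymize(r["author"], secret_key)]
        f["posts" if r["kind"] == "post" else "comments"] += 1
        f["subreddits"].add(r["subreddit"])
    return {
        uid: {
            "posts": f["posts"],
            "comments": f["comments"],
            "participation_diversity": len(f["subreddits"]),
        }
        for uid, f in feats.items()
    }
```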

From Raw Reddit Data to Structured Tables

Once you have access to raw Reddit data, the next step is to normalize it into clean, well-defined tables. A typical structure might include:

- posts table (one row per post)

- comments table (one row per comment)

- users table (one row per user, anonymized as needed)

- subreddits table (metadata about each community)

- interactions table (optional: records of user-level actions like posting, commenting, or awarding)

Typical Normalization Steps

- Identify primary keys: Ensure each entity (post, comment, user) has a unique, stable ID.

- Separate entities: Split raw JSON or API responses into distinct tables for posts, comments, users, and subreddits.

- Map relationships: Use foreign keys (e.g., post_id, parent_id, user_id) to link tables.

- Standardize timestamps: Convert all time fields into a consistent format and timezone (commonly UTC).

- Clean text fields: Remove or normalize HTML, markdown, emojis, and links as needed for your analysis or model.
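The steps above can be sketched for a single thread as follows. The code assumes input in the shape of Reddit's public thread JSON (a two-element list holding the post listing and the comment listing, with replies nested under `replies`) and keeps only a few columns, so treat it as a starting point rather than a complete schema.

```python
from datetime import datetime, timezone


def to_iso_utc(epoch_seconds) -> str:
    """Convert a Unix timestamp (such as Reddit's created_utc) to ISO 8601 in UTC."""
    return datetime.fromtimestamp(float(epoch_seconds), tz=timezone.utc).isoformat()


def flatten_thread(thread_json):
    """Split one thread payload into a post row and a list of comment rows.

    Assumes the [post_listing, comment_listing] shape of Reddit's public
    thread JSON; 'more' placeholders (unloaded replies) are skipped.
    """
    post = thread_json[0]["data"]["children"][0]["data"]
    post_row = {
        "post_id": post["id"],
        "subreddit": post["subreddit"],
        "title": post["title"],
        "created_utc": to_iso_utc(post["created_utc"]),
    }
    comment_rows = []

    def walk(listing, depth):
        for child in listing["data"]["children"]:
            if child["kind"] != "t1":
                continue  # skip 'more' stubs and anything that is not a comment
            c = child["data"]
            comment_rows.append({
                "comment_id": c["id"],
                "parent_id": c["parent_id"],  # foreign key to a post or comment
                "post_id": post["id"],  # foreign key back to the root post
                "body": c.get("body", ""),
                "created_utc": to_iso_utc(c["created_utc"]),
                "depth": depth,
            })
            # 'replies' is an empty string when a comment has no replies.
            if c.get("replies"):
                walk(c["replies"], depth + 1)

    walk(thread_json[1], 0)
    return post_row, comment_rows
```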

Using RedScraper to Extract Reddit Datasets

Collecting data yourself through the Reddit API can be time-consuming, especially when you want to gather large, historical, or multi-subreddit datasets. This is where dedicated tools such as RedScraper are useful. They can:

- Automate the extraction of posts and comments across specified subreddits, time ranges, or search queries.

- Handle pagination, rate limits, and retry logic in the background.

- Export data directly into formats like CSV, JSON, or database-ready tables.

- Preserve key metadata (scores, flairs, author names, and timestamps) needed for robust analysis.

By using such a tool, you can focus less on data collection logistics and more on how to clean, model, and analyze your Reddit dataset.
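If you collect data with your own code instead, the pagination and retry behavior described above follows a common pattern. The sketch below does not show RedScraper's API: `fetch_page` is a hypothetical callable you would implement against your data source, returning a page of items and a cursor similar to Reddit's `after` token.

```python
import time


def fetch_all(fetch_page, max_pages=10, max_retries=3, base_delay=1.0, sleep=time.sleep):
    """Collect items across cursor-paginated pages, retrying transient failures.

    `fetch_page(after)` (hypothetical) returns (items, next_after), where
    next_after is None on the last page.
    """
    items, after = [], None
    for _ in range(max_pages):
        for attempt in range(max_retries + 1):
            try:
                page, after = fetch_page(after)
                break
            except (ConnectionError, TimeoutError):
                if attempt == max_retries:
                    raise  # give up after the final retry
                sleep(base_delay * 2 ** attempt)  # exponential backoff: 1s, 2s, 4s, ...
        items.extend(page)
        if after is None:
            break  # no more pages
    return items
```

Passing `sleep` as a parameter makes the backoff testable without real waiting; in production you would also respect whatever rate limits your source documents.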

Cleaning and Preprocessing Reddit Text

Reddit text is informal, noisy, and often includes slang, emojis, links, and formatting. For research and machine learning, careful preprocessing improves data quality:

- Text normalization: Lowercasing, removing extra whitespace, and optionally stripping punctuation.

- Handling URLs and mentions: Replacing links and user mentions with standardized tokens (e.g., <URL>, <USER>).

- Removing boilerplate: Filtering out common signatures, bot messages, or automated replies if they are not relevant to your task.

- Language filtering: Keeping only text in desired languages for more consistent analysis.

- Tokenization and lemmatization: Preparing text for NLP tasks by splitting it into tokens and reducing words to their base forms.

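The extent of cleaning depends on your end goal. For example, sentiment analysis may tolerate more noise (and emojis or punctuation can themselves carry sentiment), whereas fine-grained linguistic research may need more careful cleaning that still preserves original spelling, casing, and punctuation.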
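A minimal regex-based sketch of the URL and mention handling described above follows. The patterns are assumptions tuned to common Reddit conventions (markdown links, `u/name` and `/u/name` mentions) and will miss edge cases; lemmatization and language filtering would need an NLP library and are left out.

```python
import re

MD_LINK_RE = re.compile(r"\[([^\]]*)\]\([^)\s]+\)")  # [anchor text](target)
URL_RE = re.compile(r"https?://\S+|www\.\S+")
USER_RE = re.compile(r"(?<![\w/])/?u/[A-Za-z0-9_-]+")  # u/name or /u/name
WS_RE = re.compile(r"\s+")


def clean_text(text: str, lowercase: bool = True) -> str:
    """Normalize one Reddit post or comment body for analysis."""
    if lowercase:
        text = text.lower()  # done first so the placeholder tokens stay uppercase
    text = MD_LINK_RE.sub(r"\1 <URL>", text)  # keep anchor text, replace the target
    text = URL_RE.sub("<URL>", text)
    text = USER_RE.sub("<USER>", text)
    return WS_RE.sub(" ", text).strip()  # collapse runs of whitespace
```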

Organizing Reddit Data for Research

For academic or applied research, your Reddit dataset should be organized in a way that is easy to document, replicate, and share (within ethical and legal boundaries). Consider:

- Clear schema definitions: Document all fields, data types, and possible values.

- Versioning: Track when and how the dataset was collected, including subreddits, date ranges, and filters.

- Metadata files: Store information about collection methods, tools used (such as RedScraper), and any preprocessing steps.

- Split datasets: If needed, create separate subsets for training, validation, and testing for machine learning use cases.
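For the train/validation/test split, one option is to assign whole threads by hashing `post_id`. This keeps comments from the same thread out of different splits (which would otherwise leak near-duplicate context between training and evaluation) and makes the split reproducible across reruns. The function name and the 80/10/10 proportions are illustrative defaults, not a standard.

```python
import hashlib


def assign_split(post_id: str, train: float = 0.8, val: float = 0.1) -> str:
    """Deterministically assign a thread to 'train', 'val', or 'test'.

    Hashing the post ID (not each comment) keeps a whole thread in one split.
    """
    digest = int(hashlib.sha256(post_id.encode("utf-8")).hexdigest(), 16)
    bucket = (digest % 10_000) / 10_000  # pseudo-uniform value in [0, 1)
    if bucket < train:
        return "train"
    if bucket < train + val:
        return "val"
    return "test"
```

Every comment row can then inherit the split of its `post_id`.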

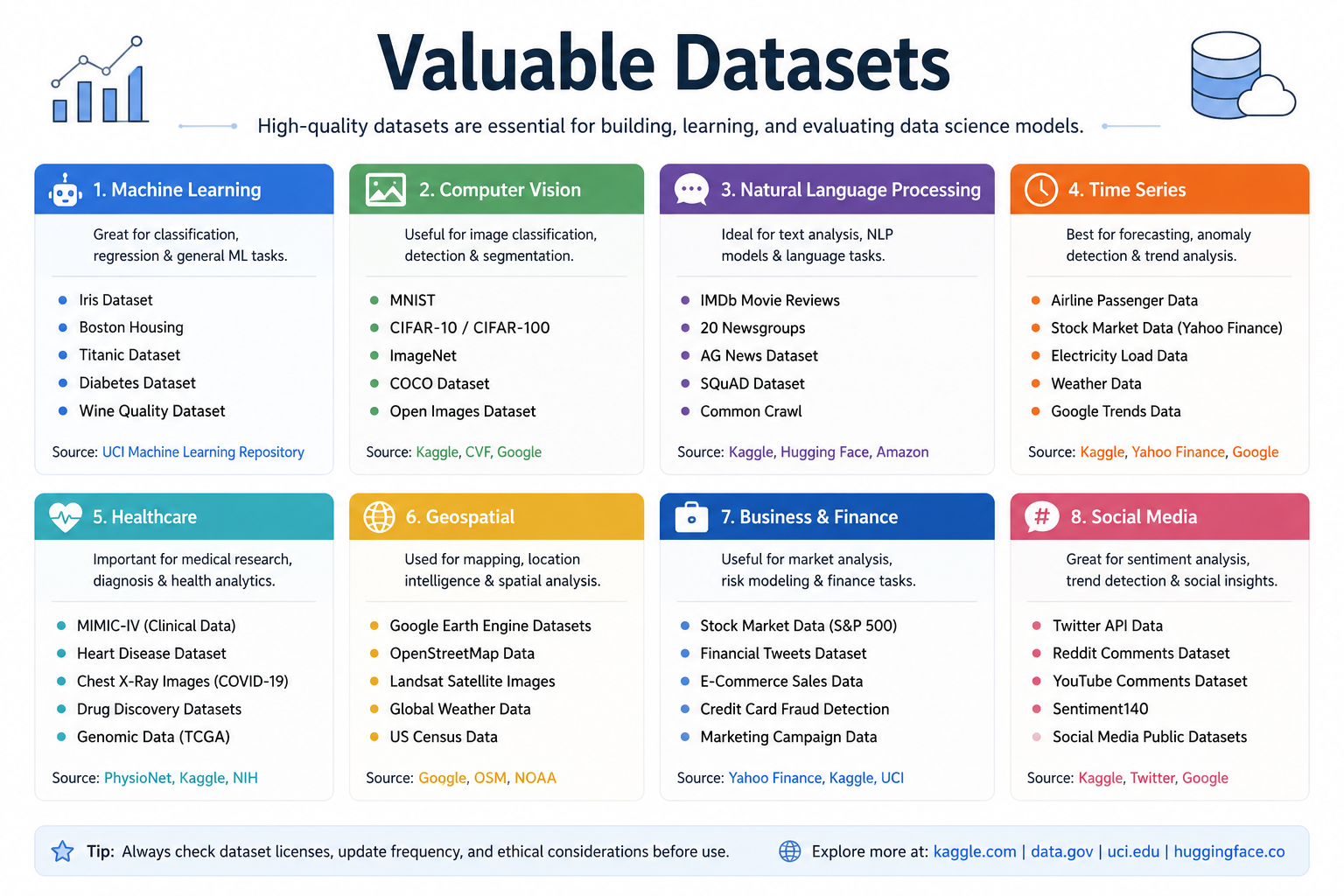

Analytics and Machine Learning Use Cases

Once your Reddit discussions are organized into a structured dataset, you can apply a wide range of analytical and machine learning techniques:

Descriptive and Exploratory Analytics

- Trend analysis: Track how discussion frequency or sentiment about a topic changes over time.

- Community comparison: Compare behavior, language, or engagement metrics across subreddits.

- User behavior: Study posting and commenting patterns, response times, or loyalty to specific communities.
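Trend analysis often starts with simple counts over time. This sketch tallies posts per subreddit per ISO week from `created_utc` epoch seconds using only the standard library. The row format is an assumption, and in practice a dataframe library would perform the same grouping.

```python
from collections import Counter
from datetime import datetime, timezone


def weekly_counts(posts):
    """Count posts per (subreddit, ISO year-week) from created_utc epoch seconds."""
    counts = Counter()
    for p in posts:
        dt = datetime.fromtimestamp(p["created_utc"], tz=timezone.utc)
        year, week, _ = dt.isocalendar()  # ISO weeks start on Monday
        counts[(p["subreddit"], f"{year}-W{week:02d}")] += 1
    return counts
```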

Natural Language Processing (NLP)

- Sentiment analysis: Classify posts and comments as positive, negative, or neutral toward a topic, brand, or policy.

- Topic modeling: Discover recurring themes and topics discussed in large collections of posts.

- Stance and opinion mining: Analyze how people position themselves with respect to controversial issues.

- Summarization: Automatically generate summaries of long discussion threads or recurring debates.

Predictive Modeling

- Engagement prediction: Predict which posts are likely to receive high scores or many comments.

- Recommendation systems: Recommend subreddits, posts, or content based on user behavior and preferences.

- Moderation tools: Detect spam, harassment, or policy-violating content using classification models.
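Before training an engagement model you need a target variable. One reasonable choice, sketched here as an assumption rather than a standard definition, is to label a post as high-engagement if its score falls in the top fraction of its own subreddit, since raw scores are not comparable between large and small communities.

```python
from collections import defaultdict


def label_high_engagement(posts, top_fraction=0.1):
    """Return a boolean label per post: True if its score is in the top
    `top_fraction` of its own subreddit (ties at the threshold count as True).
    """
    scores = defaultdict(list)
    for p in posts:
        scores[p["subreddit"]].append(p["score"])
    thresholds = {}
    for sub, vals in scores.items():
        vals.sort(reverse=True)
        k = max(1, int(len(vals) * top_fraction))  # at least one positive per subreddit
        thresholds[sub] = vals[k - 1]
    return [p["score"] >= thresholds[p["subreddit"]] for p in posts]
```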

Ethical and Legal Considerations

Working with Reddit data comes with responsibilities. Even though many conversations are public, you must respect user privacy, platform rules, and legal frameworks.

- Terms of service and API policies: Ensure that your collection methods (including the use of tools like RedScraper) comply with Reddit’s current rules.

- Anonymization: Remove or hash usernames and avoid combining datasets in ways that could re-identify individuals.

- Content sensitivity: Some subreddits discuss health, trauma, or other sensitive issues. Handle such data with extra care and, when appropriate, seek approval from an ethics review board.

- Responsible use: Avoid using Reddit datasets for harmful, manipulative, or deceptive purposes.

Best Practices for Building High-Quality Reddit Datasets

- Define your goal first: Clarify whether your focus is sentiment, topic modeling, behavior analysis, or another task; this guides what data you collect.

- Select relevant subreddits: Choose communities that align with your research domain or application area.

- Sample carefully: Decide whether you need complete coverage of certain time windows or a representative sample across a longer period.

- Track data lineage: Record how each part of your dataset was obtained and processed for reproducibility.

- Iterate: Start with a smaller extract, refine your schema and preprocessing, then scale up once the pipeline works well.
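To support lineage tracking and the metadata files described earlier, a small manifest can be generated alongside each extract. The sketch below is hypothetical: the field names are illustrative, and the checksum lets you verify later that a stored extract still matches what was documented.

```python
import hashlib
import json
from datetime import datetime, timezone


def build_manifest(rows, *, subreddits, date_range, tool, steps):
    """Describe how a dataset extract was produced, with a content checksum.

    The checksum covers rows serialized as sorted-key JSON lines, so the same
    rows in the same order always yield the same hash.
    """
    sha = hashlib.sha256()
    for row in rows:
        sha.update(json.dumps(row, sort_keys=True).encode("utf-8") + b"\n")
    return {
        "num_rows": len(rows),
        "sha256": sha.hexdigest(),
        "created_at": datetime.now(timezone.utc).isoformat(),
        "subreddits": list(subreddits),
        "date_range": list(date_range),
        "collection_tool": tool,
        "preprocessing_steps": list(steps),
    }
```

You could write the result next to the data with `json.dump(manifest, f, indent=2)` and keep it under version control with your analysis code.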

Conclusion

Reddit discussions can be transformed into rich, structured datasets that fuel research, analytics, and machine learning. By breaking down posts, comments, and user interactions into well-designed tables, applying thoughtful preprocessing, and leveraging tools like RedScraper for efficient data extraction, you can unlock deep insights into online communities and human behavior. With proper attention to ethics, privacy, and documentation, Reddit-based datasets become a powerful resource for understanding and modeling the digital public sphere.